Lately, I’ve been thinking about a fundamental shift in the architecture of children’s media.

For years, our national conversation has centered on screen time and online safety. Both remain critical. Yet neither fully captures the transformation now underway: a shift toward systems that are ambient, always-on, and increasingly intimate, with unprecedented access to children’s inner lives through data that are both intentionally shared and inferred from behavior.

As we stand at this crossroads, we are also witnessing a historic legal reckoning. Recent rulings against major platforms have signaled what some are calling a “tobacco industry moment” for tech. For the first time, juries have found companies liable not for the third-party content on their platforms, but for the product architecture itself, namely, the intentional design of features built to maximize engagement at the cost of child well-being.

The message from the courts is clear: engagement, when uncoupled from developmental health, becomes a liability.

Engines of Extraction

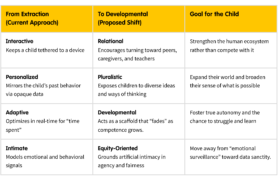

If we simply transfer the old “engagement-first” playbook to generative AI, we are building even more effective engines of extraction – tools designed to harvest data and attention, primarily to benefit the provider. Here are four distinct affordances to help us think about the trajectory of the field:

- Interactive: Continuous feedback loops designed to keep children engaged.

- Personalized: Experiences are shaped by opaque data mirrors of children’s past behavior, such as their interests and usage patterns.

- Adaptive: Systems optimize in real-time for “time spent.”

- Intimate: AI interprets and responds to children’s emotional and behavioral signals, drawing on both intentional disclosures and inferred personal data.

Without a developmental lens, these become tools for retention rather than for children’s growth.

Designing for Development

To move from extraction to development, we are proposing a shift in how we define the core affordances of AI.

At the Joan Ganz Cooney Center, we support a different underlying logic that prioritizes development over extraction. These risks are not hypothetical: the affordances already exist within the AI products children are using today, and they could be deployed without the developmental support that might make them beneficial or, at a minimum, safe. Children are already encountering AI; the question is whether we can intervene before the default approach scales.

I propose a shift in our design vocabulary:

- From Interactive to Relational: Instead of an AI that keeps a child tethered to a device, we need systems that encourage the child to turn toward the people around them – peers, caregivers, and teachers. A relational system strengthens the human ecosystem rather than competing with it.

- From Personalized to Pluralistic: Most personalization today is just a mirror that reflects a child’s past behavior back at them. Instead, we need a pluralistic approach that expands a child’s world by introducing new perspectives and diverse ways of thinking.

- From Adaptive to Developmental: “Seamless” assistance often robs children of the chance to struggle and learn. A developmental system acts as a scaffold that is present when needed but intentionally “fades” as the child gains competence, fostering true autonomy.

- From Intimate to Equity-Oriented: As AI systems begin to infer and interpret children’s emotions, we must move away from a surveillance model that treats their inner lives as mere data. An equity-oriented approach ensures that AI systems are transparent, earn a bounded level of “artificial intimacy,” and give children meaningful agency over how their most personal information is interpreted and used.

The Necessity of Legibility

This shift requires one more essential condition: legibility. The “black box” nature of current AI (and much of modern technology) is exactly what makes it so difficult for parents and regulators to trust. A developmental approach requires that a child understand, in age-appropriate ways, why a system responds the way it does. Transparency is a precondition for agency.

A Moral Choice for the Field

The lawsuits of 2026 demonstrate just one cost of getting this wrong. Today, we need a new North Star that focuses on designing for children’s benefit. We know the field will continue to build more intelligent systems; the bigger question is whether it can also design them with children’s long-term needs in mind.

But litigation risk alone isn’t an aspirational signal. For that, we can turn to early evidence suggesting that products designed with developmental principles (systems that build competence, expand perspective, and strengthen relationships) are more engaging over time. The companies investing in this architecture are the ones building trust. This is the direction we believe the field can take if it chooses to, and it is the work the Center exists to do.